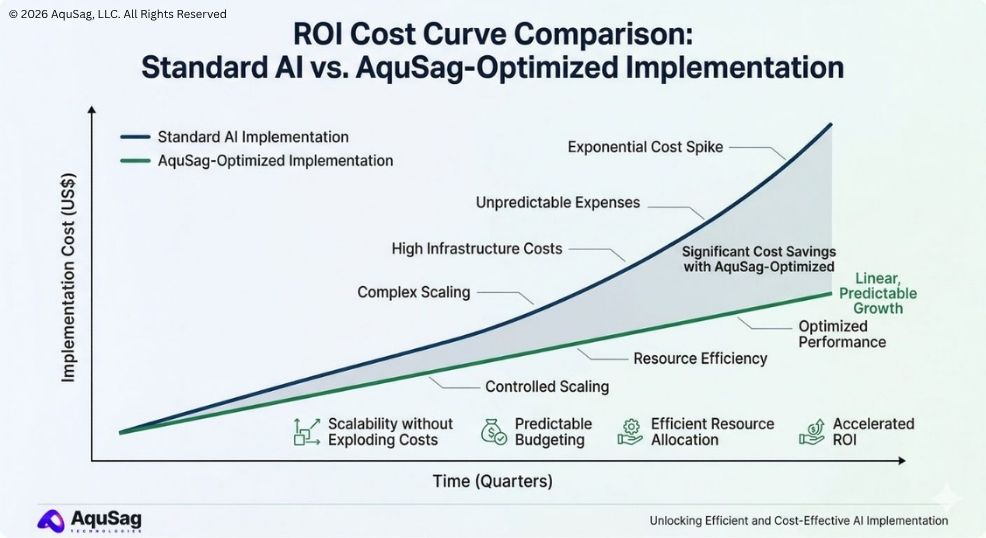

In the early days of the generative AI boom, the primary goal for most enterprises was simply "making it work." By early 2026, the conversation has shifted dramatically. While the potential of Large Language Models (LLMs) is undisputed, the "cost to serve" has become a major roadblock for scaling. Many companies that successfully deployed Autonomous Agentic AI Workflows are now looking at their monthly cloud bills with a sense of sticker shock.

At AquSag Technologies, we believe that high-performance AI should not be a luxury reserved only for the world's largest tech giants. Scaling your AI infrastructure shouldn't mean bankrupting your innovation budget. The secret to long-term Cost-Efficient AI Scaling lies in rigorous engineering and strategic architectural choices.

This guide outlines five proven strategies we use to help our clients reduce their AI operational expenses while actually improving system performance.

The Problem: The Hidden "Tax" on AI Innovation

When you move from a pilot project to a full-scale production environment, LLM inference costs climb quickly, because spend scales with every new user, request, and token. Every token a model like Gemini 1.5 Pro or GPT-5 reads or generates carries a price tag. If your system is inefficiently designed, you are essentially paying an "innovation tax" on every customer interaction.

The most expensive AI model is not the one with the highest token price; it is the one that is used for tasks a smaller model could have handled for a fraction of the cost.

For a company aiming to scale to $1M MRR and beyond, managing these costs is a survival skill. It requires a Technical Infrastructure Partner who understands that every millisecond of GPU time counts.

Strategy 1: Implement "Model Cascading" and Routing

Not every task requires a massive, multi-billion-parameter model. Asking a top-tier LLM to categorize a simple customer support email is like using a rocket ship to cross the street.

How Model Cascading Works

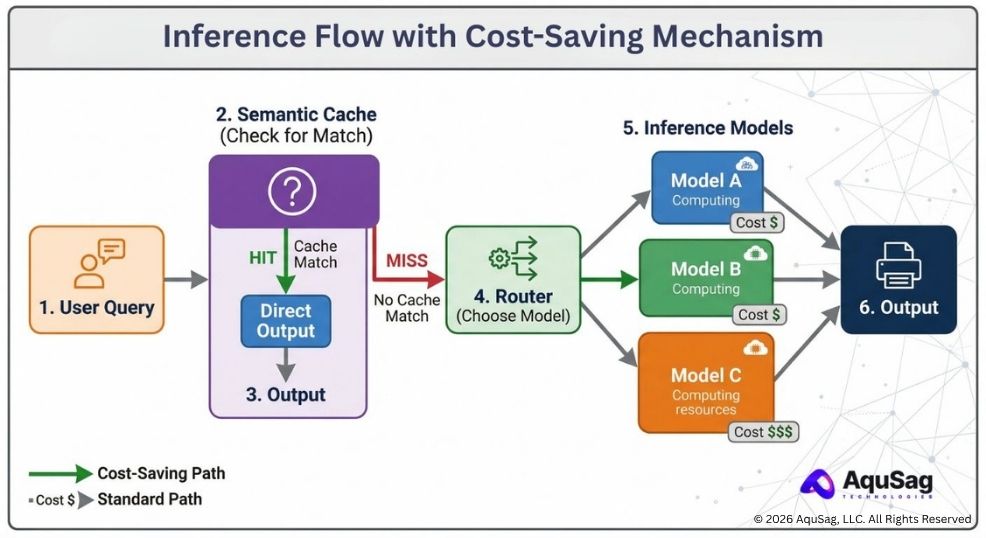

We build "routers" that analyze the complexity of an incoming request before it ever reaches a high-cost model.

- Level 1 (The Gatekeeper): A small, lightning-fast model (like Llama-3-8B or a specialized BERT model) classifies the intent.

- Level 2 (The Workhorse): If the task is standard (like basic data extraction), it goes to a mid-tier model.

- Level 3 (The Expert): Only truly complex reasoning tasks are passed to the most expensive, state-of-the-art models.

By implementing this hierarchy, our Managed Engineering Pods have helped clients reduce their total inference spend by up to 60 percent without any drop in the quality of the output.
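To make the three-level hierarchy concrete, here is a minimal routing sketch. It is illustrative only: the tier names, the relative costs, and the keyword sets are hypothetical placeholders, and the keyword matcher stands in for the small Level 1 classifier (such as a BERT-style model) that a production router would use.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    cost_per_call: float  # hypothetical relative cost units, not real prices


# Hypothetical tiers mirroring Levels 1-3.
GATEKEEPER = Tier("small-classifier", 0.01)
WORKHORSE = Tier("mid-tier-model", 0.10)
EXPERT = Tier("frontier-model", 1.00)

# Toy keyword sets standing in for a trained intent classifier (assumption).
SIMPLE_INTENTS = {"password", "refund", "hours", "shipping"}
EXTRACTION_HINTS = {"extract", "invoice", "parse", "fields"}


def classify(request: str) -> str:
    """Level 1: cheap intent classification (keyword stand-in)."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    if words & SIMPLE_INTENTS:
        return "simple"
    if words & EXTRACTION_HINTS:
        return "standard"
    return "complex"


def route(request: str) -> Tier:
    """Send each request to the cheapest tier judged capable of handling it."""
    label = classify(request)
    if label == "simple":
        return GATEKEEPER  # the small model answers or categorizes directly
    if label == "standard":
        return WORKHORSE  # routine extraction goes to the mid-tier model
    return EXPERT  # only complex reasoning reaches the expensive model


def blended_cost(requests: list) -> float:
    """Total cost with routing, for comparison against sending everything to EXPERT."""
    return sum(route(r).cost_per_call for r in requests)
```

With these placeholder costs, routing one request of each kind costs 1.11 units instead of 3.00 if all three went to the expert tier. The real savings depend entirely on your traffic mix and on how accurately the gatekeeper classifies requests.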

Strategy 2: Specialized Fine-Tuning Over Large-Scale Prompting

Many developers try to improve AI performance by adding more and more "context" to the prompt. However, long prompts lead to high "input token" costs. In 2026, the smarter move is to use RLHF and Fine-Tuning Strategies to bake that context directly into a smaller model.
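The input-token argument is simple arithmetic: prompt length × request volume × price per token. The sketch below compares a long, instruction-heavy prompt on a large model with a short prompt on a fine-tuned smaller model. All token counts and per-million-token prices are hypothetical placeholders, not quotes from any provider.

```python
def monthly_input_cost(prompt_tokens: int, requests_per_month: int,
                       usd_per_million_tokens: float) -> float:
    """Input-token spend only; output tokens, training, and hosting are excluded."""
    return prompt_tokens * requests_per_month * usd_per_million_tokens / 1_000_000


# Hypothetical scenario: 1M requests per month, placeholder prices.
long_prompt = monthly_input_cost(4_000, 1_000_000, 2.50)  # large model, long prompt
fine_tuned = monthly_input_cost(300, 1_000_000, 0.20)     # small tuned model, short prompt
print(f"long prompt: ${long_prompt:,.0f}/month, fine-tuned: ${fine_tuned:,.0f}/month")
```

Under these assumptions the script prints $10,000 versus $60 per month in input-token spend. A fair comparison would also add the one-off cost of fine-tuning and any hosting cost for the smaller model.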

The Benefits of a Domain-Specific Model

When a model is fine-tuned on your specific data—whether it is legal documents or supply chain logs—it doesn't need a five-page instruction manual every time it runs. This allows you to use smaller models that perform as well as (or better than) larger models on your specific niche. This is a foundational step in Refactoring Legacy Code to API-First Microservices, as it allows your microservices to be lightweight and fast.


Strategy 3: Semantic Caching and Prompt Optimization

The most cost-effective token is the one you never have to generate. Most enterprise AI systems answer the same types of questions repeatedly.

The Power of Semantic Caching

Instead of sending every request to the LLM, we implement a "Semantic Cache." This system stores previous answers and uses "vector similarity" to check whether a new question is semantically equivalent to one answered five minutes ago. If it is, we serve the cached answer instantly, at a tiny fraction of the cost of a fresh LLM call.
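A minimal semantic-cache sketch, under clearly stated assumptions: the `embed` function here is a toy bag-of-words counter standing in for a real embedding model, the cache does a linear scan where production systems would use a vector index, and the 0.9 similarity threshold and the `call_llm` callback are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Callable, Optional


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real semantic cache would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold  # minimum similarity counted as "the same question"
        self.entries = []  # (vector, answer) pairs; linear scan for clarity

    def lookup(self, question: str) -> Optional[str]:
        query = embed(question)
        best_score, best_answer = 0.0, None
        for vector, answer in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def store(self, question: str, answer: str) -> None:
        self.entries.append((embed(question), answer))


def answer_with_cache(question: str, cache: SemanticCache,
                      call_llm: Callable[[str], str]) -> str:
    cached = cache.lookup(question)
    if cached is not None:
        return cached  # cache hit: no LLM call, no new tokens
    result = call_llm(question)  # cache miss: pay for one generation
    cache.store(question, result)
    return result
```

The threshold is the key design choice. Set it too low and near-duplicates that need different answers (for example, "support hours on Friday") are served a stale response. Set it too high and the cache rarely hits.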

Aggressive Prompt Pruning

Every token in your system prompt costs money, and it is billed again on every request. We audit prompts to remove redundant instructions. This ensures that your GTM engineering engines run on the leanest possible "fuel," maximizing your sales ROI.
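One mechanical piece of a prompt audit can be sketched as follows: drop blank lines and instructions that repeat earlier ones after normalizing case and punctuation. This is a simplified illustration, not a full audit. It only catches literal repeats, and `rough_token_count` is a crude whitespace proxy, since real tokenizers split text differently.

```python
import re


def normalize(line: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace for comparison."""
    return re.sub(r"\s+", " ", re.sub(r"[^\w\s]", "", line.lower())).strip()


def prune_prompt(prompt: str) -> str:
    """Keep the first occurrence of each instruction; drop blanks and repeats."""
    seen = set()
    kept = []
    for line in prompt.splitlines():
        key = normalize(line)
        if not key or key in seen:
            continue  # blank line or an instruction already stated
        seen.add(key)
        kept.append(line.strip())
    return "\n".join(kept)


def rough_token_count(text: str) -> int:
    """Whitespace word count as a rough stand-in for a real tokenizer."""
    return len(text.split())
```

Instructions that say the same thing in different words still need a human (or model-assisted) review; this pass simply removes the obvious waste first.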

Strategy 4: GPU Orchestration and Quantization

Hardware is the final frontier of cost management. If you are running your own models, how you manage your GPUs is critical.

Understanding Quantization

Quantization is the process of reducing the precision of the numbers a model uses to store its weights (for example, moving from 16-bit to 4-bit, which shrinks weight memory to roughly a quarter of its original size). This allows a model to run on much cheaper hardware or serve significantly more requests on the same GPU.
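The core idea can be shown with a deliberately simple scheme: symmetric per-tensor quantization of weights to signed 4-bit integers, plus the memory arithmetic for an 8-billion-parameter model. This is a teaching sketch only; production quantization libraries typically use finer-grained (per-group) scales and calibration data to limit accuracy loss.

```python
def quantize_int4(weights: list) -> tuple:
    """Symmetric per-tensor quantization to signed 4-bit integers in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0  # one float scale shared by the tensor
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list, scale: float) -> list:
    """Map integers back to approximate float weights."""
    return [v * scale for v in q]


def weight_memory_gb(num_params: float, bits: int) -> float:
    """Memory for the weights alone (ignores KV cache, activations, and overhead)."""
    return num_params * bits / 8 / 1e9


weights = [0.82, -0.41, 0.05, -0.77, 0.33]
q, scale = quantize_int4(weights)
restored = dequantize(q, scale)  # each value is within scale / 2 of the original
print(q)
print(weight_memory_gb(8e9, 16), weight_memory_gb(8e9, 4))  # 16-bit vs 4-bit
```

The last line prints 16.0 and 4.0: the same 8B-parameter weights need about 16 GB at 16-bit precision but about 4 GB at 4-bit, which is what lets a model fit on a smaller, cheaper GPU.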

Intelligent GPU Scaling

By providing Stability as a Service, we ensure that your server capacity scales up only when demand is high and scales down (or switches to "spot instances") when demand is low. This prevents you from paying for idle silicon. This also plays a major role in Green IT Audits and Carbon-Aware Computing, as it significantly reduces the energy waste of your AI stack.
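A scaling policy of this kind can be sketched as a small decision function: size the fleet to the current queue, clamp it between a floor and a ceiling, and prefer cheaper spot capacity when load is well below peak. The function name, the per-replica capacity figure, and the 50 percent spot threshold are all hypothetical; a real deployment would feed this logic from your orchestrator's metrics and autoscaling hooks.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingDecision:
    replicas: int
    use_spot: bool


def plan_capacity(queued_requests: int, requests_per_replica: int,
                  min_replicas: int = 1, max_replicas: int = 20,
                  spot_threshold: float = 0.5) -> ScalingDecision:
    """Hypothetical policy: match replicas to demand, use spot capacity when load is low."""
    needed = math.ceil(queued_requests / requests_per_replica)
    replicas = max(min_replicas, min(max_replicas, needed))  # never idle above demand
    load_ratio = needed / max_replicas  # demand relative to peak capacity
    use_spot = load_ratio < spot_threshold  # low demand tolerates spot interruptions
    return ScalingDecision(replicas, use_spot)
```

For example, an empty queue keeps a single replica on spot capacity, while 900 queued requests at 50 per replica scale the fleet to 18 on-demand replicas.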

Strategy 5: Federated Data Access (Data Mesh)

Believe it or not, your data architecture affects your AI costs. If an AI agent has to ingest a massive, unorganized database to find an answer, your token count will skyrocket.

By implementing a Data Mesh Architecture, you allow the AI to access clean, "pre-digested" data products. This means the AI spends less time "searching" and more time "answering," which keeps the context window small and the costs low.
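The "pre-digested data product" idea can be illustrated with a small sketch. Here a hypothetical logistics domain publishes average shipping delay per region instead of handing the agent thousands of raw order rows. The data is synthetic and the field names are assumptions; the point is only the difference in how much text would enter the context window.

```python
from collections import defaultdict


def shipping_delay_product(orders: list) -> dict:
    """Hypothetical data product: average shipping delay per region,
    published by the owning domain instead of raw order rows."""
    totals = defaultdict(lambda: [0.0, 0])
    for order in orders:
        bucket = totals[order["region"]]
        bucket[0] += order["delay_days"]
        bucket[1] += 1
    return {region: round(total / count, 1)
            for region, (total, count) in sorted(totals.items())}


def build_context(product: dict) -> str:
    """Compact text an agent can place in its prompt."""
    return "; ".join(f"{region}: {delay} days average delay"
                     for region, delay in product.items())


# Synthetic raw data for illustration only.
raw_orders = [
    {"order_id": i, "region": "EU" if i % 2 else "US",
     "delay_days": 2 if i % 2 else 1, "sku": f"SKU-{i:05d}", "notes": "standard handling"}
    for i in range(1_000)
]
product = shipping_delay_product(raw_orders)
context = build_context(product)
print(context)
print(len(str(raw_orders)), "characters raw vs", len(context), "characters pre-digested")
```

The agent receives one short line ("EU: 2.0 days average delay; US: 1.0 days average delay") rather than a dump of every order, so the prompt, and the bill, stays small.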


The Role of Security in Cost Management

Security breaches are the ultimate "hidden cost." If a malicious actor finds a way to perform a "denial-of-wallet" attack by flooding your AI with expensive, recursive prompts, your budget could vanish overnight.

This is why we integrate AI Red-Teaming and Governance into our cost-optimization audits. We build guardrails that detect and block abusive prompt patterns before they reach your inference engine.
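A basic denial-of-wallet guardrail can be sketched as a per-user token budget (a token bucket that refills over time), a hard cap on prompt size, and a pattern check for prompts that try to force runaway generation. The class, the limits, and the regular expression below are hypothetical examples; real deployments would combine this with trained abuse classifiers and provider-side rate limits. Token estimates are assumed to be supplied by the caller.

```python
import re
import time


class SpendGuard:
    """Per-user token-bucket budget plus simple, hypothetical abuse heuristics."""

    # Toy pattern for prompts that ask the model to loop or repeat endlessly.
    SUSPICIOUS = re.compile(
        r"(repeat|loop|recurs\w*).{0,40}(forever|infinitely|\d{4,} times)", re.I)

    def __init__(self, capacity_tokens: float, refill_per_sec: float,
                 max_prompt_tokens: int, clock=time.monotonic):
        self.capacity = capacity_tokens    # maximum burst budget per user
        self.refill = refill_per_sec       # budget regained per second
        self.max_prompt = max_prompt_tokens
        self.clock = clock
        self.buckets = {}  # user_id -> (tokens_left, last_timestamp)

    def allow(self, user_id: str, prompt: str, estimated_tokens: int) -> bool:
        """Return True only if the request should reach the inference engine."""
        if estimated_tokens > self.max_prompt or self.SUSPICIOUS.search(prompt):
            return False  # block oversized or abusive-looking prompts outright
        now = self.clock()
        tokens, last = self.buckets.get(user_id, (self.capacity, now))
        tokens = min(self.capacity, tokens + (now - last) * self.refill)
        if estimated_tokens > tokens:
            self.buckets[user_id] = (tokens, now)
            return False  # budget exhausted: reject instead of paying for it
        self.buckets[user_id] = (tokens - estimated_tokens, now)
        return True
```

Because each user's spend is capped by the bucket, a flood of expensive requests from one account is rejected once its budget is exhausted, which keeps the worst-case bill bounded and predictable.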

A secure AI system is a predictable AI system. A predictable AI system is a profitable one.

Why AquSag Technologies is the Leader in AI Efficiency

We are more than just a provider of talent; we are an Engineering Infrastructure Partner. We don't just care about "building a feature." We care about the long-term sustainability and profitability of your technical bench.

When you hire an AquSag Managed Engineering Pod, you are hiring experts who are obsessed with optimization. We bring the specialized subject matter expertise required to navigate the complex trade-offs between model accuracy and inference cost.

Our AI Optimization Services Include:

- Full AI Spend Audits and ROI Projections.

- Implementation of Model Routers and Semantic Caching.

- Custom LLM Fine-Tuning for model size reduction.

- GPU Orchestration and Cloud Infrastructure Hardening.

- Green IT Audits for energy-efficient AI operations.

- Secure API-First Microservices integration for AI.

Conclusion: Scale Your Intelligence, Not Your Costs

The transition to an AI-first economy shouldn't be a financial burden. With the right engineering strategies, you can deploy powerful Autonomous Agentic AI Workflows and lead-generation systems that are both effective and affordable.

At AquSag Technologies, we help you master the "unit economics" of AI. We provide the stable, expert resources you need to build a future-proof infrastructure that delivers massive value without the massive bill.

Ready to Optimize Your AI Infrastructure?

Stop overpaying for your inference and start scaling your innovation. If you are looking for a partner who can provide a secure technical bench and the engineering expertise to slash your AI costs, we are here to help.

Contact AquSag Technologies to Scale Your Cost-Efficient AI Today